Updated 3/23/2026

Artificial intelligence is rapidly entering K–12 classrooms. From generative writing assistants to adaptive platforms that personalize instruction, AI is reshaping both student learning and classroom practice. But its spread has also raised concerns about academic integrity, data privacy, algorithmic bias, misinformation, overreliance on automation, and the potential displacement or deskilling of educators.

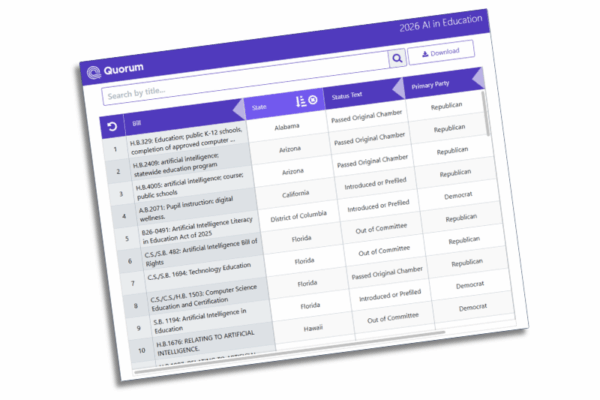

States are moving quickly to define how schools should respond. During the 2026 legislative session, FutureEd is tracking 53 bills across 25 states that address artificial intelligence in classroom instruction. While lawmakers are also considering broader AI-related issues such as youth online safety and consumer protection, this tracker focuses specifically on measures shaping teaching and learning: what students would learn about AI, how schools would integrate it into coursework, and what guardrails would govern its use.

Developments in Washington signal momentum as well. Early in his second term, President Donald Trump issued an executive order advancing AI literacy and classroom integration, and the U.S. Department of Education identified AI in education as a grantmaking priority.

With AI literacy set to be assessed for the first time on the 2029 Programme for International Student Assessment (PISA)—the global exam comparing the skills of 15-year-olds across participating countries—it is perhaps not surprising that policymakers are focused on preparing students to understand and responsibly use artificial intelligence. AI literacy could soon become a core competency in public education, encompassing how AI systems function, how to evaluate outputs critically, how to recognize bias and privacy risks, and how to use these tools ethically. How states act now will shape not only students’ readiness for the next decade of learning and work, but also whether U.S. students measure up to their international peers in emerging AI skills.

So far this session, three bills have been enacted:

- Idaho’s S.B. 1227 directs the state education department to develop a comprehensive generative AI framework addressing privacy, procurement safeguards, transparency, academic integrity, AI literacy standards, and professional development. Districts will be required to adopt aligned policies.

- Utah’s H.B. 218 establishes a required grade 7 or 8 digital skills course including AI literacy alongside cybersecurity and digital privacy.

- Utah’s H.B. 273 integrates AI into the state’s computer science standards and adopts broader digital literacy standards covering ethical AI use, information filtering, and critical evaluation of digital content. The law also authorizes supervised “AI sandbox” courses.

Other bills are continuing to move.

AI Literacy and Curriculum

A clear trend this session is the effort to embed AI literacy into the school day through graduation requirements, updated standards, standalone courses, or electives.

Several states would require AI-related coursework for high school graduation, most often embedded within computer science courses. In Iowa, multiple bills (H.S.B. 610, H.F. 2540, and S.F. 2094) would require students in the graduating class of 2030–31 and beyond to complete a semester of computer science and AI coursework covering foundational concepts, ethics, and societal impacts, while also strengthening teacher preparation requirements. Illinois H.B. 4411 would require a full year of computer science and AI beginning with students entering ninth grade in 2028–29, and Ohio H.B. 594 would require one unit of computer science, including AI content, for students entering ninth grade on or after July 1, 2029.

Other states would pair definitional updates with new credit requirements. Alabama H.B. 329 and Missouri H.B. 3139 would add AI to their definitions of computer science while establishing new graduation expectations—a full course beginning with Alabama’s class of 2032 and a half-unit in Missouri starting in 2027–28.

Notably, most of these proposals do not increase overall graduation credit totals. Instead, AI coursework would count toward existing math, science, CTE, foreign language, or elective requirements, depending on the state. State education agencies would generally be responsible for defining standards, approving qualifying coursework, and overseeing implementation.

Hawaii is taking a different approach. Its S.B. 2212 would require all juniors and seniors to complete a six-week AI literacy course beginning in 2027–28, covering machine learning, generative AI, ethics, and culturally responsive content tied to state history. The bill would also establish a $5 million AI Education Grant Pilot Program for teacher training and curriculum development.

Beyond explicit graduation mandates, many proposals integrate AI into academic standards. Florida's C.S./C.S./H.B. 1503 (and S.B. 1694) and New Jersey's A.4352/S.2862, for example, would require AI instruction within computer science coursework, with Florida also requiring opportunities to apply AI responsibly in general education courses.

Other proposals embed AI literacy through electives or other instructional models. Arizona H.B. 4005 would require instruction on the ethical, moral, and educational uses of AI—either as a standalone course or embedded within existing coursework—covering foundational concepts, effective prompt development, and responsible use. New Mexico H.B. 330 would require AI ethics to be offered as a computer science elective, while Hawaii H.B. 2466 would direct development of a statewide K–12 social media and AI literacy curriculum addressing misinformation, deepfakes, mental health, and responsible digital behavior.

Some proposals introduce AI earlier in students’ academic trajectories. South Carolina H.B. 4582 would authorize age-appropriate AI instruction statewide and direct the state education agency to issue guidance.

AI Governance and Oversight

A second major trend centers on governance: ensuring AI supports instruction without undermining educator roles, student privacy, or academic integrity.

Several states would impose statutory protections while requiring districts to adopt formal AI policies addressing acceptable use, data protection, transparency, and oversight. South Carolina's H.B. 5253 would establish some of the strongest statewide guardrails: it would require written parental opt-in consent and annual public disclosure of AI tools and data practices, and it would prohibit AI from replacing licensed teachers in core instruction or grading. The bill would ban automated high-stakes decisions without meaningful human oversight, restrict student profiling, impose strict data minimization and deletion requirements, prohibit commercial use of student data, and require school entities to adopt policies governing student use of generative AI for coursework. Compliance would be tied to participation in state-funded education programs, and parents would be granted enforcement rights, including the ability to seek injunctive relief or damages.

Similarly, Oklahoma’s Responsible Technology in Schools Act (S.B. 1734) would require every district to adopt a written AI policy before the 2027–28 school year. Policies would address approved and prohibited instructional uses, data protection and minimization, family transparency, and periodic review. The bill would also require educator-directed, human-in-the-loop AI use; prohibit AI from serving as the primary basis for grading, discipline, placement, or other high-stakes decisions; and preserve parents’ right to opt students out of student-facing AI tools without academic penalty.

Other states would establish state-developed frameworks that guide local policy. West Virginia’s H.B. 5205 would require the State Board of Education to develop model AI policies that would automatically apply to districts that fail to adopt compliant local policies by July 2027. The bill would also prohibit AI from independently making high-stakes student decisions, require safeguards against harmful or manipulative content, establish developmentally appropriate use guidance, and tie compliance to certain funding streams.

A smaller group of states is pursuing alternative models. Utah's S.B. 322 would establish a five-year educational technology regulatory sandbox allowing voluntary pilot programs under strict privacy, safety, and human-in-the-loop requirements, with statewide expansion contingent on independent evaluation and legislative approval. By contrast, New York's A.9190 would prohibit most classroom AI use below ninth grade, with limited exceptions.

Maryland's Artificial Intelligence Ready Schools Act (S.B. 720/H.B. 1057) would take a more coordinated approach. The bill would require the State Department of Education to publish annually updated AI guidance for students, educators, and administrators; mandate district-aligned AI policies and the designation of local AI coordinators; establish a statewide AI Education Collaborative with annual reporting requirements; require university-supported evaluation and certification of AI tools; align procurement with state guidelines; embed AI literacy into workforce preparation standards; and implement compensated, statewide professional development through a train-the-trainer model.

Task Forces and Advisory Bodies

Not all states are moving directly to mandate coursework or impose regulatory guardrails. Some are establishing advisory structures to study AI’s instructional and ethical implications before adopting formal requirements.

For example, Hawaii’s H.B. 1676 would create an Artificial Intelligence in Education Task Force within the University of Hawaii to develop recommendations on equity, data privacy, professional development, and responsible implementation. Illinois H.B. 5113 would establish a temporary commission to develop best practices for AI use and related technology policies. And New York A.6972 would create a statewide AI Working Group within the State Education Department to develop integration recommendations.

We will continue to monitor and update the tracker as new bills are introduced and progress through the legislative process.